Are you targeting the wrong people? Your model might be to blame!

A practitioner’s guide to Propensity Models and Look-alike Models

There is a version of this story that plays out at almost every growth-stage company I have encountered: the marketing team is running campaigns, the data team has shipped a model, conversion rates are “okay,” and yet the cost per acquisition keeps climbing. Leadership asks for better targeting and the data team rushes to build “something more sophisticated”. And still, the needle barely moves.

I have learnt that the problem, more often than not, is not a lack of models. It is a lack of clarity about what each model is actually solving for.

In modern digital marketing, two modelling approaches dominate the conversation around audience intelligence: propensity models and look-alike models. Both are powerful and legitimate. Sadly, both are also routinely misapplied, sometimes simultaneously, in ways that quietly erode marketing ROI while keeping dashboards looking healthy on the surface.

This article is my attempt to cut through that confusion. I am going to explain both frameworks from first principles, in terms as plain as I possibly can. I will then show where they diverge strategically, where they complement each other operationally, and how teams in fintech, e-commerce, telecom, and SaaS are applying them in practice. I don’t want to bog you down with too many details in one sitting, so some of this will carry over into part 2; make sure to come back for it.

Along the way, I will point out the mistakes I see most often, because knowing what not to do is just as valuable as knowing what to do. Let’s start with a framing that I find clarifies everything.

The two questions every marketing team is really asking

Strip away the jargon and you will find that virtually every targeting challenge in marketing reduces to one of two questions:

Question A: “Among the people I already know (my customers, my users, my subscribers), who is most likely to do what I want them to do next?”

Question B: “Among all the people in the world I don’t yet know, who is most likely to become a great customer if I reach them?”

These are genuinely different questions: they operate on different data, require different modelling logic, and serve different stages of the customer journey. Yet they are treated interchangeably far more often than they should be.

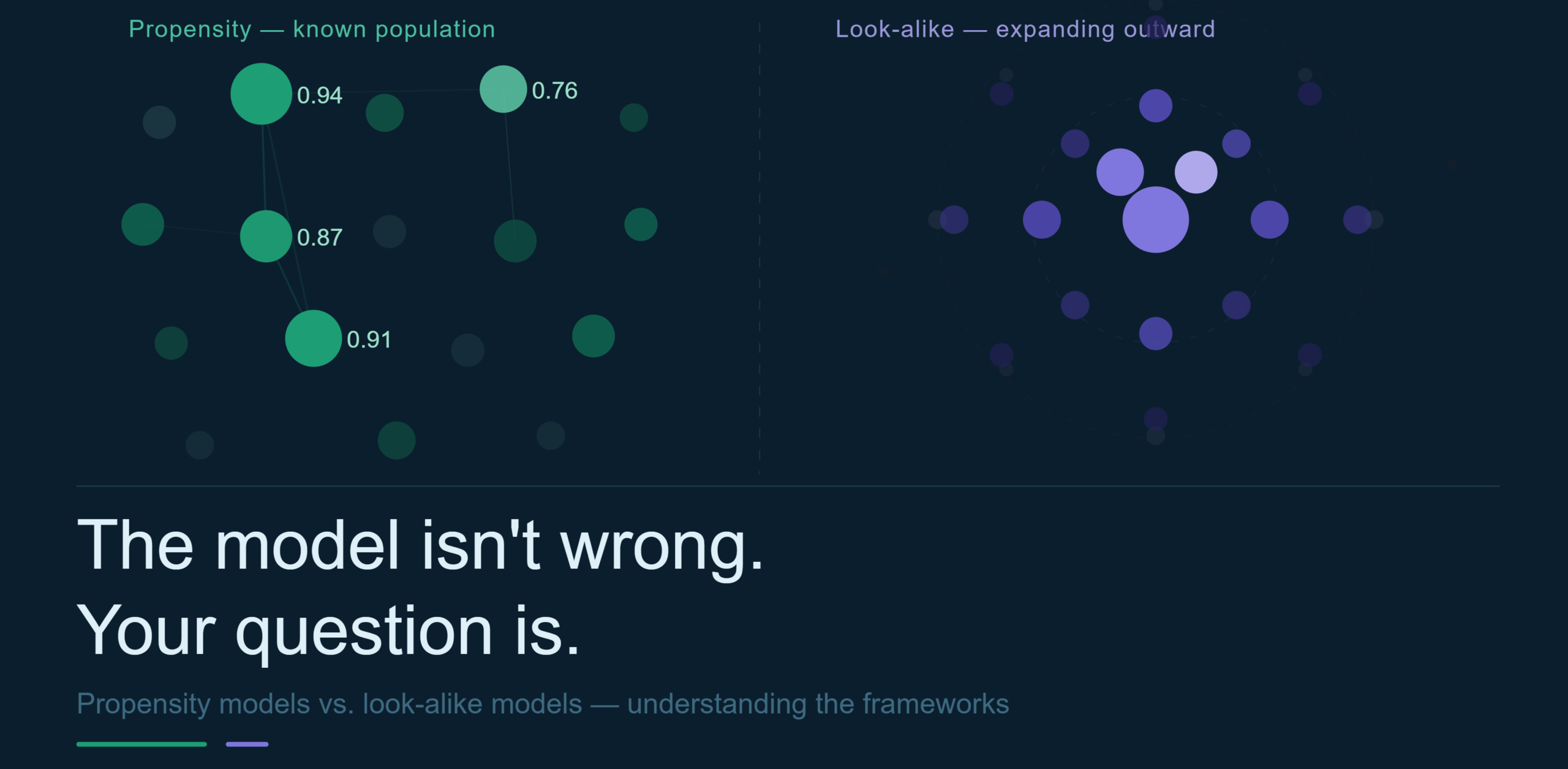

Propensity models answer Question A. Look-alike models answer Question B.

Once you hold that distinction clearly in your mind, almost everything else in this article will feel intuitive.

What Is a Propensity Model?

A propensity model is a machine learning system that assigns a probability score to every individual in a known population, estimating how likely each person is to perform a specific action within a specific timeframe.

The word “propensity” describes a natural tendency or inclination. And that is exactly what the model is trying to surface: not who did something in the past, but who is inclined to do something in the future, based on behavioral patterns we can observe today.

The output is always a number between 0 and 1. If the model is well calibrated, a score of 0.87 means there is roughly an 87% probability that this customer will take the target action, and a score of 0.09 means almost no likelihood at all. From there, marketers typically rank the entire population by score and focus their resources on the top decile, top quintile, or whatever threshold their budget and channel capacity dictates.
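Reading 0.87 as "87%" only holds if the model is calibrated, and the usual check uses the same ranking step: sort customers by score, cut them into equal-sized buckets (deciles, quintiles), and compare the average score in each bucket with the conversion rate actually observed. A minimal plain-Python sketch, assuming you have historical scores and 0/1 outcomes side by side; the function name and the ten toy customers are illustrative:

```python
def calibration_by_bucket(scores, outcomes, n_buckets=10):
    """Split customers into equal-sized score buckets (highest scores first) and
    compare the mean predicted score with the observed conversion rate in each."""
    if len(scores) != len(outcomes):
        raise ValueError("scores and outcomes must be the same length")
    if len(scores) < n_buckets:
        raise ValueError("need at least one customer per bucket")
    # Rank (score, outcome) pairs from highest to lowest score.
    pairs = sorted(zip(scores, outcomes), key=lambda p: p[0], reverse=True)
    rows = []
    for k in range(n_buckets):
        # Integer arithmetic assigns rank position i to bucket i * n_buckets // len(pairs).
        bucket = [p for i, p in enumerate(pairs) if i * n_buckets // len(pairs) == k]
        mean_score = sum(s for s, _ in bucket) / len(bucket)
        observed_rate = sum(o for _, o in bucket) / len(bucket)
        rows.append((k + 1, mean_score, observed_rate))
    return rows


# Ten historical customers: model score and whether they actually converted (invented).
scores = [0.90, 0.85, 0.70, 0.65, 0.50, 0.45, 0.30, 0.25, 0.10, 0.05]
outcomes = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

for bucket, mean_score, observed in calibration_by_bucket(scores, outcomes, n_buckets=5):
    print(f"bucket {bucket}: mean score {mean_score:.2f}, observed rate {observed:.2f}")
```

If the two columns track each other, the scores can be read as probabilities; if they diverge, the ranking may still be useful, but the raw numbers should not be quoted as percentages.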

How it actually works

The engine behind a propensity model is a classification task. You have historical records of people who did take an action (positive examples) and people who didn’t (negative examples). The model learns the patterns that distinguish the two groups and applies that learned logic to score the current population.
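The classification step fits in a few dozen lines of plain Python. This is a minimal from-scratch logistic regression trained by batch gradient descent, written this way only so the example runs without dependencies; the two features (a 30-day login rate and a multiple-products flag), the eight historical records and the customer IDs are invented for illustration. In practice you would use a library implementation such as scikit-learn's `LogisticRegression`.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function, mapping any real number into (0, 1)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(weights, bias, x):
    """Propensity score for one customer's feature vector."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def train_logistic_regression(X, y, learning_rate=0.5, epochs=5000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_samples, n_features = len(X), len(X[0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, label in zip(X, y):
            # (predicted - actual) is the gradient of the log loss w.r.t. the logit.
            error = predict_proba(weights, bias, x) - label
            for j in range(n_features):
                grad_w[j] += error * x[j]
            grad_b += error
        weights = [w - learning_rate * g / n_samples for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n_samples
    return weights, bias


# Historical records: [login rate over the last 30 days, holds more than one product].
history_X = [[0.9, 1], [0.8, 1], [0.7, 0], [0.6, 1],   # took the action (positives)
             [0.1, 0], [0.2, 0], [0.3, 1], [0.05, 0]]  # did not (negatives)
history_y = [1, 1, 1, 1, 0, 0, 0, 0]

weights, bias = train_logistic_regression(history_X, history_y)

# Apply the learned logic to today's (unlabeled) customer base.
current_base = {"c101": [0.85, 1], "c102": [0.1, 0], "c103": [0.5, 0]}
scores = {cid: predict_proba(weights, bias, x) for cid, x in current_base.items()}
print(scores)
```

The historical rows carry labels; the current base has none, which is exactly why it needs scoring.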

The quality of the model therefore lives and dies on three things:

- the quality of the label (what exactly counts as a conversion?)

- the richness of the features (what signals does the model have access to?)

- the discipline of the validation (are you testing on data from a later period than the training data, rather than on a random slice of the past?)
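The first and third items lend themselves to a short sketch. Below, the label is pinned down precisely (here, hypothetically, "upgraded within 60 days of the snapshot date"), and validation is done out of time: the model trains only on snapshots whose label window closed before a cutoff and is tested on snapshots taken after it. The field names, dates and helper functions are illustrative assumptions, not a standard API.

```python
from datetime import date, timedelta

WINDOW_DAYS = 60  # hypothetical label window: "upgrades within 60 days"


def upgrade_label(snapshot_date: date, upgrade_dates: list[date], window_days: int = WINDOW_DAYS) -> int:
    """1 if the customer upgraded in (snapshot_date, snapshot_date + window_days], else 0."""
    window_end = snapshot_date + timedelta(days=window_days)
    return int(any(snapshot_date < d <= window_end for d in upgrade_dates))


def out_of_time_split(rows: list[dict], cutoff: date, window_days: int = WINDOW_DAYS):
    """Train on snapshots whose label window closed by `cutoff`; test on snapshots taken on or after it.

    Snapshots whose window straddles the cutoff are dropped, so no outcome
    from the test period leaks into training labels.
    """
    train = [r for r in rows if r["snapshot_date"] + timedelta(days=window_days) <= cutoff]
    test = [r for r in rows if r["snapshot_date"] >= cutoff]
    return train, test


rows = [
    {"customer_id": "c01", "snapshot_date": date(2024, 1, 1), "upgrade_dates": [date(2024, 2, 15)]},
    {"customer_id": "c02", "snapshot_date": date(2024, 1, 1), "upgrade_dates": [date(2024, 3, 15)]},
    {"customer_id": "c03", "snapshot_date": date(2024, 4, 1), "upgrade_dates": []},
    {"customer_id": "c04", "snapshot_date": date(2024, 6, 1), "upgrade_dates": [date(2024, 7, 1)]},
]
for r in rows:
    r["label"] = upgrade_label(r["snapshot_date"], r["upgrade_dates"])

train, test = out_of_time_split(rows, cutoff=date(2024, 5, 1))
print([r["customer_id"] for r in train], [r["customer_id"] for r in test])  # ['c01', 'c02'] ['c04']
```

Dropping the rows whose 60-day window straddles the cutoff is the design choice that matters: keeping them would let outcomes from the validation period leak into training.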

Feature engineering is where experienced practitioners earn their keep. For a retail bank, useful signals might include how long ago a customer last logged in, whether their balance has been trending upward over the past three months, and whether they hold only one product or multiple. For a SaaS company, it might be daily active usage rate, the number of team members they have invited, and whether they have accessed a premium feature on a free trial. For a telecom, it might be roaming frequency, average monthly spend trend, and whether they’ve contacted customer support in the past 30 days.
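As a sketch of what that engineering looks like for the retail-bank signals, the function below turns raw account data into three features. The input shapes (a list of month-end balances, a product count) and the feature names are assumptions made for illustration.

```python
from datetime import date


def retail_bank_features(last_login: date, monthly_balances: list[float],
                         product_count: int, as_of: date) -> dict:
    """Turn raw account data into model-ready signals (feature names are illustrative)."""
    if len(monthly_balances) < 4:
        raise ValueError("need at least four month-end balances for a three-month trend")
    # Recency: days since the customer last logged in.
    days_since_login = (as_of - last_login).days
    # Trend: average month-over-month change across the last three months.
    # Four month-end balances give three changes, whose sum telescopes to last - first.
    recent = monthly_balances[-4:]
    balance_trend = (recent[-1] - recent[0]) / 3
    return {
        "days_since_login": days_since_login,
        "balance_trend": balance_trend,
        "multi_product": int(product_count > 1),
    }


features = retail_bank_features(
    last_login=date(2024, 5, 20),
    monthly_balances=[900.0, 1000.0, 1100.0, 1300.0, 1600.0],
    product_count=2,
    as_of=date(2024, 6, 1),
)
print(features)  # {'days_since_login': 12, 'balance_trend': 200.0, 'multi_product': 1}
```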

None of these features individually tells the whole story. The model finds the combinations that matter: the signal in the noise.

The algorithms commonly used range from logistic regression (fast, interpretable, regulatory-friendly) to gradient boosted trees like XGBoost (more powerful on tabular data, handles missing values gracefully) to deep learning architectures for sequential transaction data. The right choice depends on the data volume, the regulatory context, and how much interpretability is required downstream.

Where propensity models live in the wild

- Retention and churn prevention. This is the most mature and widely deployed application. A telecom company scores its entire subscriber base monthly on churn risk. The 5% most at-risk customers receive proactive outreach, such as a loyalty offer, a call from an account manager, or a targeted upgrade. The bottom 50% get nothing, which is also a deliberate, data-driven decision.

- Cross-sell and upsell. A neobank wants to know which current account holders are most likely to open a savings pot or take out a personal loan this quarter. Instead of emailing everyone, it scores the base and runs campaigns that target the top 20%. Conversion rates can triple while email unsubscribe rates drop. The model has, in effect, made the marketing feel like good timing rather than spam.

- Product adoption after launch. When a SaaS company ships a new feature, they do not blast it to every user. They score the base on adoption propensity, that is, who has exhibited the kinds of behaviors that historically precede feature uptake, and they sequence the rollout. Early adopters get access first and laggards get it later, sometimes paired with education content.

- Credit risk and collections. In fintech and lending, propensity models can predict the likelihood of default or late payment. This allows lenders to intervene early by restructuring terms, triggering early collections contact, or adjusting credit limits before a small problem becomes an unrecoverable one.

- In-app personalization. Real-time propensity scoring, fed by streaming pipelines, powers the next-best-action engine inside a product. When a customer lands on a savings dashboard after receiving a salary credit, the system knows, from a model that scored them milliseconds ago, that now is the optimal moment to surface a “Set a savings goal” prompt.
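The churn example is really a ranking rule with two cut-offs. A minimal sketch, assuming churn scores already exist per customer: the top 5% get outreach, the bottom 50% deliberately get nothing, and everyone in between falls into a middle tier that is a hypothetical addition here. The tier names are illustrative; the same pattern with a single cut-off covers the cross-sell case that targets the top 20%.

```python
def assign_treatments(churn_scores: dict[str, float], top_share: float = 0.05,
                      bottom_share: float = 0.50) -> dict[str, str]:
    """Map each customer to a treatment tier based on their churn-risk rank."""
    ranked = sorted(churn_scores, key=churn_scores.get, reverse=True)  # riskiest first
    n = len(ranked)
    n_top = max(1, round(n * top_share))  # always treat at least one customer
    n_bottom = round(n * bottom_share)
    plan = {}
    for position, customer_id in enumerate(ranked):
        if position < n_top:
            plan[customer_id] = "proactive_outreach"  # e.g. loyalty offer, account-manager call
        elif position >= n - n_bottom:
            plan[customer_id] = "no_action"           # deliberate: spend nothing here
        else:
            plan[customer_id] = "standard_journey"    # hypothetical middle tier
    return plan


# Twenty customers with churn scores 0.00, 0.05, ..., 0.95 (invented).
churn_scores = {f"s{i:02d}": i / 20 for i in range(20)}
plan = assign_treatments(churn_scores)
print(plan["s19"], plan["s10"], plan["s09"])  # proactive_outreach standard_journey no_action
```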

What Propensity Models do well — and where they fall short

Strengths:

- Precise; they operate on people you know deeply.

- Measurable; holdout experiments give you clean lift estimates.

- Iteratively improvable; you get more labeled data every day.

- Explainable; they tend to be more defensible in regulated industries because you can explain what drove a particular score using tools like SHAP values.
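A holdout experiment is simple to sketch: randomly withhold a share of the customers the model selected, run the campaign on the rest, and compare conversion rates. The function names and the outcome counts below are illustrative, and a real analysis would add a significance test or confidence interval, which this sketch omits.

```python
import random


def split_holdout(customer_ids, holdout_share=0.10, seed=42):
    """Randomly withhold a share of the targeted customers from the campaign."""
    ids = list(customer_ids)
    random.Random(seed).shuffle(ids)  # fixed seed so the split is reproducible
    n_holdout = round(len(ids) * holdout_share)
    return ids[n_holdout:], ids[:n_holdout]  # (treated, holdout)


def holdout_lift(treated_conversions, treated_size, holdout_conversions, holdout_size):
    """Absolute and relative lift of the treated group's conversion rate over the holdout's."""
    treated_rate = treated_conversions / treated_size
    holdout_rate = holdout_conversions / holdout_size
    absolute = treated_rate - holdout_rate
    relative = absolute / holdout_rate if holdout_rate > 0 else float("inf")
    return absolute, relative


treated, holdout = split_holdout(range(10_000))
# Illustrative outcome counts, not real campaign results.
absolute, relative = holdout_lift(540, len(treated), 40, len(holdout))
print(f"absolute lift {absolute:.3f}, relative lift {relative:.0%}")
```

Because assignment is random, the holdout tells you what would have happened without the campaign, which is what makes the lift estimate clean.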

Limitations:

- A propensity model cannot discover new customers. It can only work on people already in your database.

- The population ceiling is your existing user base. If that base is small, or if it skews toward a particular demographic, the model’s outputs inherit those constraints.

- You cannot propensity-score your way to growth from a standing start.

What Is a Look-alike Model?

A look-alike model is a system that takes a group of people you already know and value (your best customers, your highest-LTV subscribers, your most engaged users) and finds statistically similar people in a much larger, external population that you have not yet reached.

The logic of this model is deliberately expansive. You are not trying to predict a known customer’s behavior from their own history. You are trying to find structural resemblance across a population, then bet that people who look like your best customers will become good customers if you reach them with the right message.

Think of it as reverse-engineering your ideal customer profile at scale, then letting that profile go hunting in the wild.

How it actually works

The process starts with a seed audience. This is a carefully curated list of your highest-value existing customers. “Highest-value” can mean different things depending on context:

- longest tenure

- highest lifetime revenue

- best retention rates

- highest engagement frequency, or

- some composite score that captures multiple dimensions of customer quality.
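One way to build the composite-score option is to convert each quality metric to a percentile rank and average them. The sketch below assumes equal weights and three hypothetical metric names (tenure, lifetime revenue, weekly sessions); both the weighting and the metric choice are judgment calls you would tune to your business.

```python
def percentile_ranks(values):
    """Scale each value's rank to [0, 1]; ties are broken by original order."""
    n = len(values)
    if n == 1:
        return [1.0]
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0.0] * n
    for position, i in enumerate(order):
        ranks[i] = position / (n - 1)
    return ranks


def build_seed(customers, metrics, seed_size):
    """Return the IDs of the `seed_size` customers with the highest equal-weighted composite."""
    columns = {m: percentile_ranks([c[m] for c in customers]) for m in metrics}
    composite = [sum(columns[m][i] for m in metrics) / len(metrics) for i in range(len(customers))]
    ranked = sorted(range(len(customers)), key=lambda i: composite[i], reverse=True)
    return [customers[i]["id"] for i in ranked[:seed_size]]


customers = [
    {"id": "a", "tenure_months": 36, "lifetime_revenue": 4200, "sessions_per_week": 5},
    {"id": "b", "tenure_months": 3, "lifetime_revenue": 150, "sessions_per_week": 1},
    {"id": "c", "tenure_months": 24, "lifetime_revenue": 3900, "sessions_per_week": 6},
    {"id": "d", "tenure_months": 12, "lifetime_revenue": 800, "sessions_per_week": 2},
    {"id": "e", "tenure_months": 30, "lifetime_revenue": 1200, "sessions_per_week": 3},
]
metrics = ["tenure_months", "lifetime_revenue", "sessions_per_week"]
print(build_seed(customers, metrics, seed_size=2))  # ['a', 'c']
```

Percentile ranks put metrics on a common scale, so revenue in the thousands does not drown out tenure in months.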

Getting the seed right is the most important and most underappreciated step. A seed that mixes your best customers with average ones will produce a look-alike audience that resembles average customers. Put simply, the signal you put in determines the signal you get out.

Once the seed is defined, the model characterizes it. On a platform like Meta, this means the system analyzes hundreds of demographic, behavioral, and interest attributes associated with those users and builds a multidimensional profile of what the seed “looks like” in the platform’s data universe. In a programmatic environment, similar profiling happens against device behavior, content consumption patterns, and interest graphs. In a data clean room, it happens through privacy-preserving matching against third-party data signals.

The model then scores every other individual in the broader population on their similarity to the seed. Technically, this involves similarity or distance metrics, such as cosine similarity or Euclidean distance in an embedding space, or collaborative-filtering logic, applied to feature vectors representing each user. Individuals are then ranked by similarity, and the most similar form the look-alike audience.
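To make the similarity-scoring step concrete, here is one simple variant: average the seed’s feature vectors into a centroid and rank prospects by cosine similarity to it. Ad platforms’ own look-alike systems are proprietary and not necessarily built this way; the vectors and IDs are invented, and features are assumed to be already scaled to comparable ranges.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def rank_prospects(seed_vectors, prospects):
    """Score each prospect against the seed centroid, most similar first."""
    profile = centroid(seed_vectors)
    scored = [(pid, cosine_similarity(vec, profile)) for pid, vec in prospects.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Seed: three best customers described by three scaled features (invented).
seed = [[1.0, 0.9, 0.1], [0.9, 1.0, 0.0], [1.0, 0.8, 0.2]]
prospects = {"p1": [0.95, 0.9, 0.1], "p2": [0.1, 0.2, 1.0], "p3": [0.5, 0.6, 0.5]}

ranked = rank_prospects(seed, prospects)
print([pid for pid, _ in ranked])  # ['p1', 'p3', 'p2']
```

A single centroid is the crudest possible profile; a seed with several distinct customer types would be better served by comparing each prospect with its nearest seed members instead.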

Most platforms offer a “reach expansion” dial. Tighten the similarity threshold and you get a smaller, more precise audience; loosen it and you get more reach but lower fidelity.

For expensive, high-consideration products, such as a premium bank account or an enterprise SaaS plan, precision almost always wins. For awareness campaigns where volume matters more than intent, looser expansion makes sense.
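The dial can be pictured as choosing how far down a similarity-ranked list you go. The sketch below assumes prospects are already ranked (the scores are invented) and treats a hypothetical `fraction` parameter as the dial; as the audience grows, its mean similarity to the seed falls, which is the precision-for-reach trade-off described above.

```python
def audience_at_expansion(ranked, fraction):
    """Take the top `fraction` of a similarity-ranked population (the 'dial')."""
    n = max(1, round(len(ranked) * fraction))
    audience = ranked[:n]
    mean_similarity = sum(score for _, score in audience) / n
    return [pid for pid, _ in audience], mean_similarity


# Ten prospects already ranked by similarity to the seed (scores invented).
ranked = [(f"p{i}", 1.0 - i / 10) for i in range(10)]
for fraction in (0.2, 0.5, 1.0):
    audience, fidelity = audience_at_expansion(ranked, fraction)
    print(f"{fraction:.0%} of population -> {len(audience)} prospects, mean similarity {fidelity:.2f}")
```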

Where Look-alike Models live in the wild

- Paid acquisition campaigns. This is the dominant use case. An e-commerce brand with 50,000 loyal customers uploads that list to Meta, creates a look-alike audience of 2 million similar people across the platform, and runs a prospecting campaign. The resulting cost per acquisition is typically lower than with broad targeting because the audience has been pre-filtered for structural similarity to people who’ve already proven they will buy.

- Geographic expansion. A fintech company that has succeeded in one market wants to enter a new country. It uploads its best customers from market one, adapts the model to the demographic and behavioral norms of market two (an important and often skipped step), and uses the resulting look-alike audience to prioritize which users to target during launch.

- App install campaigns. Mobile-first brands, such as retail investment apps, grocery delivery platforms, and digital wallets, use look-alike audiences across Meta, Google UAC, and Apple Search Ads to drive installs from people who resemble their highest-retention users, not just their highest-volume installers.

- Audience discovery for new product lines. When a brand launches a product that targets a segment they have not served before, they may not yet have a relevant internal customer base to propensity-score. A look-alike model built from a small early adopter cohort can expand reach while the brand builds up enough first-party data to eventually power propensity scoring.

- B2B SaaS prospecting. Look-alike logic works in B2B too, but at the company level rather than the individual level. A SaaS platform identifies its top 100 customer companies by ARR and expansion behavior, profiles them by industry vertical, employee count, technology stack, and growth signals, then finds companies that match that profile in a prospecting database like ZoomInfo or LinkedIn Sales Navigator.
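The "adapt to market two" step from the geographic-expansion example can take many forms. One simple, hypothetical approach is to standardize each behavioral feature within its own market before measuring similarity, so that a heavy spender in a lower-spend market is compared with heavy spenders in the seed market rather than penalized for the absolute difference. The spend figures below are invented.

```python
import statistics


def standardize_within_market(values):
    """Express each value as standard deviations from its own market's mean."""
    mean = statistics.fmean(values)
    spread = statistics.pstdev(values)
    return [(v - mean) / spread if spread > 0 else 0.0 for v in values]


# Monthly spend for seed customers in market one vs. prospects in market two,
# where typical spend levels differ by an order of magnitude (numbers invented).
market_one_spend = [100.0, 200.0, 300.0]
market_two_spend = [10.0, 20.0, 30.0]

z_one = standardize_within_market(market_one_spend)
z_two = standardize_within_market(market_two_spend)
print(z_one, z_two)
```

After standardization, the top spender in each market lands at the same point on the scale, so a similarity model built on market one’s seed can still recognize market two’s best prospects.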

What Look-alike Models do well — and where they fall short

Strengths:

- The core strength is scale and discovery. Look-alike modelling lets you move beyond the walled garden of your existing customer base and reach people you would never have found through manual segmentation.

- When seeded well, they reliably outperform broad demographic targeting in acquisition campaigns.

- They are accessible to teams that do not have massive internal data science resources; the platforms do the heavy lifting.

Limitations:

- You are working with the platform’s data, not your own, which means the similarity logic is partially a black box and may not reflect the behavioral dimensions you actually care about most.

- Attribution is genuinely difficult: look-alike audiences operate at the top of the funnel, and the conversion eventually happens through other channels, making it hard to isolate the model’s contribution.

- The seed quality problem means that if your existing customer base is itself skewed (e.g. geographically concentrated or demographically lopsided), your look-alike audience will replicate those biases at scale.
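A quick diagnostic for the seed-bias problem is to compare the seed’s mix on a given attribute with the mix in the market you want to reach. A minimal sketch; the region labels and counts are invented, and in practice you would run it across several attributes.

```python
from collections import Counter


def share_gaps(seed_values, reference_values):
    """Seed share minus reference-population share for each category."""
    seed_counts, ref_counts = Counter(seed_values), Counter(reference_values)
    categories = sorted(set(seed_counts) | set(ref_counts))
    return {
        c: seed_counts[c] / len(seed_values) - ref_counts[c] / len(reference_values)
        for c in categories
    }


seed_regions = ["north"] * 8 + ["south"] * 2
market_regions = ["north"] * 5 + ["south"] * 5
gaps = share_gaps(seed_regions, market_regions)
print(gaps)
```

A large positive gap (here, the north is over-represented by about 30 percentage points) is a warning that the look-alike audience will lean the same way.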

The core differences: prediction vs. resemblance

These two models are often conflated because they both involve machine learning, produce audiences, and are used to improve marketing efficiency. Conflating them is like confusing a cardiologist and a genetics counselor because both wear white coats and both want to keep you healthy. Different problem, different tools, different data, different outputs.

Here is how the conceptual architecture of each model plays out across the dimensions that matter in practice:

| Dimension | Propensity Model | Look-alike Model |
|---|---|---|
| Core question | Who among my known users will act? | Who in the world resembles my best users? |
| Data domain | Your first-party behavioral data | Seed (yours) + platform/third-party data |
| Population | Known, existing customers | Unknown, external prospects |
| Funnel stage | Mid-to-lower funnel | Upper-to-mid funnel |
| Marketing goal | Retain, cross-sell, upsell, activate | Acquire, prospect, expand reach |
| Output | Individual probability score (0–1) | Ranked audience segment by similarity |
| Primary channel | CRM, email, in-app, paid remarketing | Paid social, programmatic, video |
| Measurability | Clean via holdout experiments | Harder; requires geo tests or MMM |
| Reach ceiling | Fixed (your existing base) | Scalable (the platform’s universe) |
| Regulatory risk | High (model risk, explainability) | Medium-high (consent, data provenance) |

Propensity models are about prediction from depth. They know a great deal about a small, known population and use that depth to forecast individual future behavior. The intelligence is rich and precise.

Look-alike models are about inference from resemblance. They know less about each individual but operate across a vast external population, using structural similarity as a proxy for behavioral alignment. The intelligence is wide and probabilistic.

Neither model is superior. They occupy different positions in the marketing intelligence stack. The most effective teams therefore treat them not as competing tools but as instruments tuned for different jobs, and they know instinctively which job they are facing before they reach for either one.

✦ Through my data lens ✦

Most teams do not have a modelling problem. They have a question-definition problem. They build sophisticated machinery and models to answer questions they have not actually asked clearly, and then wonder why the output does not move the business.

Before you open a single IDE, try writing the question on a whiteboard in plain language. “Who among my existing customers is likely to upgrade within 60 days?” or “Who in the world looks like my top 500 customers?” If you can’t write it in one sentence without jargon, then you are not ready to model it yet.

Clarity of a question is the leverage point that most data teams skip. Don’t skip it 😊